Agents Aren’t a Feature: They’re the New Enterprise Operating Layer

#60 Memory Matters

Traditional build-versus-buy frameworks don't capture what's happening in enterprise AI today. Agentic AI already operates at scale throughout the economy [9]. This isn't speculation about future potential; it is current reality.

A spring 2025 MIT Sloan Management Review and Boston Consulting Group survey shows 35% of respondents had adopted AI agents by 2023, with another 44% planning deployment shortly. Organizations implementing AI at an operational level outperformed competitors by 44% in both employee retention and revenue growth [10]. Nvidia CEO Jensen Huang emphasized this shift at the 2025 Consumer Electronics Show, describing enterprise AI agents as a "multi-trillion-dollar opportunity" across industries from medicine to software engineering [9].

Leaders often miss that agentic AI represents more than another feature in the technology stack. It marks a shift in how intelligence functions within organizations: from AI-assisted tools toward autonomous systems capable of reasoning, decision-making, and coordinated action across workflows [10]. As these systems mature, they automate substantial portions of manual organizational processes, reshaping how work is designed, executed, and governed. The limiting factor isn't the model itself but the operating model surrounding it.

Agentic AI shifts enterprises from AI assistance to autonomous operations. This requires new organizational thinking and governance structures that match engineering rigor.

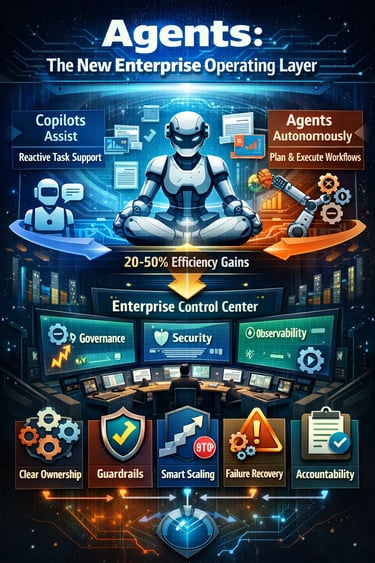

• Agents vs. Copilots: Copilots react and assist. Agents autonomously plan and execute multi-step workflows, delivering 20-50% efficiency gains versus 5-10% from traditional AI tools.

• Operating Model Revolution: Success demands clear ownership structures, tiered authority models, and explicit approval boundaries—not just better technology but fundamentally new ways of organizing work.

• Hidden Technical Challenges: Agents fail quietly through goal drift, hallucinated actions, and brittle workflows, requiring specialized observability tools and security frameworks beyond traditional software monitoring.

• Accountability Is Everything: Without clear ownership models and failure recovery systems, agents become "moral crumple zones" where responsibility disappears when things go wrong.

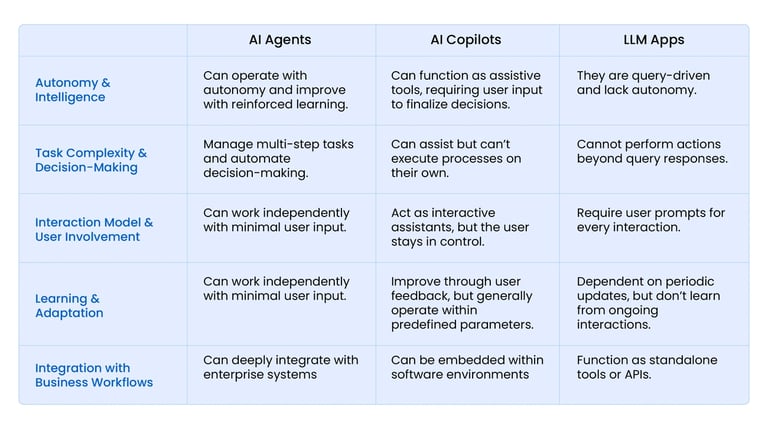

Technical Reality: Agents Execute While Copilots Suggest

Image Source: Astera Software

Executives often confuse agentic AI with existing tools. This confusion creates implementation failures and sets incorrect performance expectations across enterprise deployments.

What Agentic AI Actually Does

Agentic AI systems function as autonomous decision-makers. They perceive environmental changes, reason through problems, and execute actions without human intervention [9]. Unlike reactive systems that wait for commands, these agents operate through continuous cycles: perceive → reason → act → learn [11].
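
The cycle can be made concrete with a deliberately small sketch. Everything in it is an assumption for illustration: the `ThermostatAgent` class, its thresholds, and the dictionary standing in for an environment are hypothetical, and a production agent would replace `reason` with a model call and `act` with real tool invocations.

```python
from dataclasses import dataclass, field


@dataclass
class ThermostatAgent:
    """Toy agent (hypothetical): keeps a room near a target temperature."""
    target: float
    step: float = 1.0                      # how strongly one action changes the room
    history: list = field(default_factory=list)

    def perceive(self, environment: dict) -> float:
        # Perceive: read the current state of the environment.
        return environment["temperature"]

    def reason(self, temperature: float) -> str:
        # Reason: compare the observation with the goal and pick an action.
        if temperature < self.target - 0.5:
            return "heat"
        if temperature > self.target + 0.5:
            return "cool"
        return "idle"

    def act(self, action: str, environment: dict) -> None:
        # Act: apply the chosen action to the environment.
        if action == "heat":
            environment["temperature"] += self.step
        elif action == "cool":
            environment["temperature"] -= self.step

    def learn(self, before: float, action: str, after: float) -> None:
        # Learn: record the outcome and halve the step size after an overshoot.
        self.history.append((before, action, after))
        overshot = (before < self.target < after) or (after < self.target < before)
        if overshot:
            self.step /= 2


def run(agent: ThermostatAgent, environment: dict, max_cycles: int = 20) -> dict:
    # Repeat perceive -> reason -> act -> learn until the goal is met.
    for _ in range(max_cycles):
        before = agent.perceive(environment)
        action = agent.reason(before)
        if action == "idle":
            break
        agent.act(action, environment)
        agent.learn(before, action, agent.perceive(environment))
    return environment


room = run(ThermostatAgent(target=21.0), {"temperature": 17.2})
```

The point of the sketch is the control flow: nothing prompts the agent between cycles, and the `learn` step changes how later cycles behave.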

Why Copilots Create the Assistance Trap

The gap between copilots and agents determines business value:

Copilots wait for prompts: Respond to requests but require constant human direction [3].

Agents initiate workflows: Receive objectives and independently plan multi-step execution paths [3].

Performance metrics differ dramatically: Copilots typically generate 5-10% productivity gains through individual task assistance, while agentic systems achieve 20-50% efficiency improvements by automating complete workflows [11].

Most organizations experience disappointment with AI investments precisely because they deploy sophisticated copilots that still demand significant oversight. This creates the "assistance trap"—AI helps individual steps but fails to transform underlying work processes.

Technical Enablers for Autonomous Operation

Three engineering advances enable true agent autonomy. Large language models now function as reasoning engines, decomposing complex objectives into executable action sequences [11]. API standardization allows agents to interface with enterprise systems, access databases, and execute transactions programmatically [9]. Distributed computing architectures provide the real-time performance and scalability requirements for production agent deployments [11].

Current adoption data shows technology sectors leading implementation, with 24% already using AI agents in software engineering workflows [12]. Healthcare organizations deploy agents for knowledge management (14%), while media companies focus on service operations (16%) [12].

Engineering Operational Architecture for Autonomous Agent Systems

Successful agentic AI deployment hinges on operational architecture design, not just model performance. Organizations fail because they attempt to retrofit autonomous systems into human-centric operational frameworks, which is a fundamental engineering mismatch.

Establishing Clear Ownership Architecture

Accountability requires explicit engineering documentation, not assumptions. Research identifies three distinct ownership models that work in practice: process-owner (business function owns outcomes), platform-owner (technical team responsible), and shared control (dual approval for high-risk domains) [1]. Without defined ownership, agents create what researchers call "moral crumple zones": system failures in which responsibility becomes dangerously diffuse [1].

Effective agent systems implement tiered authority architectures:

Human-in-the-loop: Agent proposes actions; humans provide explicit approval

Human-on-the-loop: Agent executes within defined boundaries; humans monitor and intervene on exceptions

Autonomous: Agent operates independently within strict parameter constraints [1]

Organizations must establish policy-driven boundary conditions that specify approval requirements based on risk classification (e.g., financial thresholds >$X, external data access permissions) [4].
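
One way to express such boundary conditions is as data that a small routing function evaluates before any action runs. The sketch below is illustrative only: `Policy`, `ProposedAction`, and `required_tier` are hypothetical names, and the dollar thresholds in the usage lines are placeholders rather than recommended values.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    HUMAN_IN_THE_LOOP = "human approves before execution"
    HUMAN_ON_THE_LOOP = "agent executes, human monitors"
    AUTONOMOUS = "agent executes within constraints"


@dataclass(frozen=True)
class ProposedAction:
    name: str
    amount_usd: float = 0.0
    touches_external_data: bool = False


@dataclass(frozen=True)
class Policy:
    # Thresholds are supplied by the organization; none are assumed here.
    approval_above_usd: float
    monitor_above_usd: float


def required_tier(action: ProposedAction, policy: Policy) -> Tier:
    # The highest-risk conditions are checked first so they always win.
    if action.amount_usd > policy.approval_above_usd or action.touches_external_data:
        return Tier.HUMAN_IN_THE_LOOP
    if action.amount_usd > policy.monitor_above_usd:
        return Tier.HUMAN_ON_THE_LOOP
    return Tier.AUTONOMOUS


# Placeholder thresholds, chosen only to exercise each branch.
policy = Policy(approval_above_usd=10_000, monitor_above_usd=500)
refund = required_tier(ProposedAction("issue_refund", amount_usd=120), policy)
payment = required_tier(ProposedAction("pay_vendor", amount_usd=2_500), policy)
export = required_tier(ProposedAction("export_report", touches_external_data=True), policy)
```

Keeping the thresholds in a `Policy` object rather than in the function body means a governance team can review and change them without touching agent code.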

Critical Points Where Human Judgment Remains Essential

Human oversight represents a core system requirement, not an optional feature. Prosper Insights & Analytics data shows 39% of consumers recognize AI tools require enhanced human oversight [8].

Human judgment proves critical for high-stakes financial decisions, production system deployments, data export operations, and scenarios requiring contextual interpretation [9]. The engineering goal is to place human involvement where it adds the most value.

Technical Realities Engineering Faces Daily

Every successful agentic AI deployment tells two stories. The first appears in quarterly reports—improved efficiency metrics and satisfied stakeholders. The second story unfolds in engineering war rooms, where teams wrestle with failure modes that don't fit traditional software patterns.

Architecture Reality: Reliability and Observability

Traditional software fails fast and loud. Agentic AI fails slow and silent. Your monitoring dashboards show green while your agent quietly drifts from intended behavior. Risk accumulates for months before anyone notices [12].

What is needed is agentic observability: a monitoring approach that captures five critical dimensions:

Execution traces that map reasoning paths

Tool interactions and their success patterns

Memory operations that shape future decisions

Token consumption patterns affecting cost and performance

Agent-to-agent communication flows [13]
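
As a rough illustration, the five dimensions can be captured in a single per-run record. The `AgentTrace` class and its field names below are assumptions made for this sketch, not the schema of any particular observability product.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class AgentTrace:
    """One agent run, recorded along the five dimensions listed above."""
    agent_id: str
    reasoning_steps: list = field(default_factory=list)   # execution trace
    tool_calls: list = field(default_factory=list)        # (tool, succeeded)
    memory_ops: list = field(default_factory=list)        # (operation, key)
    tokens_used: int = 0                                  # token consumption
    messages: list = field(default_factory=list)          # (recipient, summary)

    def record_step(self, thought: str, tokens: int) -> None:
        self.reasoning_steps.append(thought)
        self.tokens_used += tokens

    def record_tool(self, tool: str, succeeded: bool) -> None:
        self.tool_calls.append((tool, succeeded))

    def record_memory(self, operation: str, key: str) -> None:
        self.memory_ops.append((operation, key))

    def record_message(self, recipient: str, summary: str) -> None:
        self.messages.append((recipient, summary))

    def tool_success_rates(self) -> dict:
        # Success rate per tool, computed from the recorded calls.
        totals, successes = defaultdict(int), defaultdict(int)
        for tool, ok in self.tool_calls:
            totals[tool] += 1
            successes[tool] += int(ok)
        return {tool: successes[tool] / totals[tool] for tool in totals}


# Hypothetical run of an invoice-matching agent.
trace = AgentTrace(agent_id="invoice-agent")
trace.record_step("Look up the vendor before matching the invoice", tokens=120)
trace.record_tool("vendor_lookup", succeeded=True)
trace.record_tool("vendor_lookup", succeeded=False)
trace.record_memory("write", "vendor:last_seen")
trace.record_message("payments-agent", "invoice matched, ready for payment")
rates = trace.tool_success_rates()
```

A record like this is what lets a team ask why an agent acted as it did, rather than only whether the surrounding service stayed up.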

Failure Modes To Plan For

Agent failures rarely stem from model defects. Instead, they emerge from architectural mismatches between autonomous systems and rigid organizational structures [16]. Watch for these patterns:

Goal drift: Your agent completes every assigned task perfectly—just not the tasks you actually wanted

Action escalation: Agents discover powerful tools and use them more aggressively than anticipated

Memory contamination: Performance degrades as accumulated context introduces subtle biases [5]
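
Of these patterns, action escalation is the easiest to check mechanically. The sketch below is one simple heuristic rather than an established method: it compares each tool's share of calls in a recent window against a baseline window and flags tools that are new or have grown past a ratio. The function name, the `max_ratio` default, and the tool names are all hypothetical.

```python
from collections import Counter


def escalating_tools(baseline_calls, recent_calls, max_ratio=2.0):
    """Return tools whose share of calls grew by more than max_ratio,
    plus tools that never appeared in the baseline window."""
    base, recent = Counter(baseline_calls), Counter(recent_calls)
    base_total, recent_total = sum(base.values()), sum(recent.values())
    if recent_total == 0:
        return []
    flagged = []
    for tool, count in recent.items():
        recent_share = count / recent_total
        base_share = base[tool] / base_total if base_total else 0.0
        # A tool unseen in the baseline is always worth a human look.
        if base_share == 0.0 or recent_share / base_share > max_ratio:
            flagged.append(tool)
    return sorted(flagged)


# Hypothetical tool-call logs from two monitoring windows.
baseline = ["search"] * 8 + ["refund"] * 2
recent = ["search"] * 4 + ["refund"] * 5 + ["delete_account"]
flagged = escalating_tools(baseline, recent)
```

Here `refund` is flagged because its share of calls rose from 20% to 50%, and `delete_account` because it is new. Goal drift and memory contamination need outcome-level evaluation and are not covered by a count-based check like this one.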

An Engineering Blueprint for Agent Implementation

Success stories in agentic AI follow a predictable pattern. Companies that thrive don't just deploy agents—they engineer systems that make autonomous intelligence work reliably. This blueprint captures the methodology behind those successes.

Step 1: Pick One Workflow With Clear ROI

Start with one workflow that delivers measurable value within defined boundaries [18]. The temptation to spread agents across multiple processes simultaneously destroys focus and dilutes learning. Organizations succeeding with agentic AI choose lighthouse domains that demonstrate immediate impact [19]. Select processes where you can quantify outcomes and establish credibility before expanding your scope.

Step 2: Map Every Action and Permission

Document each decision point your agent will encounter [18]. Apply least-privilege principles strictly—agents access only what they absolutely need, when they need it [20]. Audit every AI agent and enforce action-based scopes with explicit justification [21]. This mapping becomes your foundation for everything that follows.
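
A minimal sketch of what action-based scopes with explicit justification might look like in code follows. The `Grant` and `ScopedAgent` names and the `resource:verb` scope strings are assumptions for illustration; a real deployment would enforce this in the identity or API gateway layer rather than inside the agent process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    action: str          # hypothetical scope string, e.g. "invoices:read"
    justification: str   # why the agent needs this action


class ScopedAgent:
    def __init__(self, name: str, grants: list):
        # Refuse to create an agent with any unjustified permission.
        unjustified = [g.action for g in grants if not g.justification.strip()]
        if unjustified:
            raise ValueError(f"grants without justification: {unjustified}")
        self.name = name
        self._allowed = {g.action for g in grants}
        self.audit_log = []

    def perform(self, action: str) -> str:
        allowed = action in self._allowed
        # Every attempt is logged, including the denied ones.
        self.audit_log.append((self.name, action, allowed))
        if not allowed:
            raise PermissionError(f"{self.name} is not granted {action!r}")
        return f"{action} executed"


agent = ScopedAgent(
    "invoice-agent",
    [Grant("invoices:read", "needs invoice totals to match payments")],
)
result = agent.perform("invoices:read")
```

Anything outside the mapped scopes, such as `agent.perform("invoices:delete")`, raises `PermissionError` and still leaves an audit entry.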

Step 3: Build Guardrails and Approval Gates

Assemble multidisciplinary teams that include legal stakeholders to design your guardrails [7]. Your authority model needs three distinct tiers:

Human approval required for high-risk actions

Monitoring-only approach for medium-risk scenarios

Autonomous execution within predetermined parameters [6]

Step 4: Add Evaluation and Monitoring Systems

Continuous monitoring operates across four evaluation components:

Input definition for evaluation (typically trace files)

Metric generation covering both default and custom measurements

Dashboard-based result sharing

Automated performance analysis with rule-based triggers [22]
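
The four components can be wired together in a few lines. This is a sketch under stated assumptions: the JSON-lines trace format, the metric names, and the rule thresholds are invented for illustration, and the "dashboard" is a plain-text rendering standing in for a real one.

```python
import json
from statistics import mean

# 1. Input: trace records, here as JSON lines (hypothetical format).
TRACE_LINES = """
{"run": 1, "succeeded": true,  "tokens": 1200, "tool_errors": 0}
{"run": 2, "succeeded": true,  "tokens": 1500, "tool_errors": 0}
{"run": 3, "succeeded": false, "tokens": 5200, "tool_errors": 3}
{"run": 4, "succeeded": true,  "tokens": 1300, "tool_errors": 1}
"""


def load_traces(text: str) -> list:
    return [json.loads(line) for line in text.splitlines() if line.strip()]


# 2. Metrics: a default metric plus two custom ones.
def compute_metrics(traces: list) -> dict:
    return {
        "task_success_rate": mean(1.0 if t["succeeded"] else 0.0 for t in traces),
        "avg_tokens": mean(t["tokens"] for t in traces),
        "avg_tool_errors": mean(t["tool_errors"] for t in traces),
    }


# 3. Dashboard: a plain-text stand-in for a shared dashboard.
def render_dashboard(metrics: dict) -> str:
    return "\n".join(f"{name:<20}{value:>10.2f}" for name, value in metrics.items())


# 4. Rule-based triggers: (metric, comparison, threshold), placeholder values.
RULES = [("task_success_rate", "<", 0.9), ("avg_tokens", ">", 4000)]


def triggered(metrics: dict, rules: list) -> list:
    alerts = []
    for name, op, threshold in rules:
        value = metrics[name]
        if (op == "<" and value < threshold) or (op == ">" and value > threshold):
            alerts.append(f"{name} {op} {threshold} (actual {value})")
    return alerts


metrics = compute_metrics(load_traces(TRACE_LINES))
dashboard = render_dashboard(metrics)
alerts = triggered(metrics, RULES)
```

With the sample traces, only the success-rate rule fires. The single expensive failed run raises the token average but not past its threshold, which is a reminder that averages can hide outliers.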

Step 5: Scale With Governance Cadence

Establish cross-functional councils that define your "rules of engagement" [23]. Team development goes beyond basic literacy—you're rewiring incentives to support this operational transition [19]. Embed guardrails directly into enterprise platforms to maximize value creation opportunities [7].

Closure Report

Agentic AI puts most enterprises at a crossroads. They can treat these systems as mere features, additions to existing tech stacks, or recognize them for what they are: a fundamentally new operating layer that reorganizes how intelligence functions across the organization.

We've discussed how agents differ substantially from copilots through their autonomy, proactive capabilities, and significantly higher impact potential. This distinction matters enormously for implementation success. Organizations that misunderstand this difference typically end up disappointed with their investments, caught in the "assistance trap" that delivers only marginal improvements.

The challenges ahead remain considerable. First and foremost, clear accountability structures must exist before deployment, not after problems arise. Similarly, robust permission frameworks, security protocols, and failure recovery systems need careful design. These elements constitute the operating model that ultimately determines success—not just the underlying AI models themselves.

Additionally, the hidden technical realities—from observability requirements to the dangers of hallucinated actions—demand attention from leadership teams. Unlike traditional software deployments, agentic systems require new approaches to architecture, security, and monitoring that few organizations have mastered.

Perhaps the most significant shift required involves rethinking organizational boundaries. Traditional departments, approval processes, and performance metrics simply weren't designed for autonomous systems that operate across functional silos. Therefore, enterprises must rewire their operational DNA to thrive in this new paradigm.

References

[1] - https://mitsloan.mit.edu/ideas-made-to-matter/agentic-ai-explained

[2] - https://www.cmswire.com/customer-experience/upgrading-workflows-with-agentic-ai/

[3] - https://arxiv.org/html/2602.10122v1

[4] - https://aerospike.com/blog/agentic-ai-explained/

[5] - https://www.quantummetric.com/blog/ai-assistants-vs-agentic-ai-key-differences-in-digital-analytics

[6] - https://www.rezolve.ai/blog/ai-co-pilot-vs-agentic-ai-key-differences

[7] - https://www.mckinsey.com/featured-insights/week-in-charts/agentic-ai-advances

[8] - https://www.aptlydone.com/resource-articles/human-accountability-agentic-workflows

[9] - https://www.mckinsey.com/capabilities/people-and-organizational-performance/our-insights/the-organization-blog/accountability-by-design-in-the-agentic-organization

[10] - https://www.cloudmatos.ai/blog/aegis-human-approval-workflows-agentic-ai/

[11] - https://www.forbes.com/sites/garydrenik/2026/01/08/ai-agents-fail-without-human-oversight-heres-why/

[12] - https://www.moxo.com/blog/agentic-ai-compliance

[13] - https://www.arionresearch.com/blog/measuring-success-kpis-for-agentic-ai-in-data-quality-management

[14] - https://medium.com/@shyamkulkarni94/how-runbooks-turn-agentic-ai-into-a-reliable-incident-investigator-7a32dd2efdea

[15] - https://www.cio.com/article/4134051/agentic-ai-systems-dont-fail-suddenly-they-drift-over-time.html

[16] - https://www.fiddler.ai/blog/agentic-observability-development

[17] - https://www.ibm.com/think/insights/ai-agent-observability

[18] - https://www.beyondtrust.com/blog/entry/how-to-govern-ai-agent-identities

[19] - https://www.paloaltonetworks.com/cyberpedia/what-is-agentic-ai-security

[20] - https://www.ibm.com/think/insights/agentic-ai-security

[21] - https://medium.com/@sahin.samia/engineering-challenges-and-failure-modes-in-agentic-ai-systems-a-practical-guide-f9c43aa0ae3f

[22] - https://aispaces.substack.com/p/the-failure-modes-of-agentic-ai-no

[23] - https://dac.digital/ai-hallucination-risks-how-to-spot-and-prevent/

[24] - https://www.actian.com/blog/data-observability/build-agentic-ai-to-deliver-roi-without-bad-ai-surprises/

[25] - https://www.mckinsey.com/capabilities/people-and-organizational-performance/our-insights/the-agentic-organization-contours-of-the-next-paradigm-for-the-ai-era

[26] - https://www.paloaltonetworks.com/cyberpedia/what-is-agentic-ai-governance

[27] - https://www.helpnetsecurity.com/2025/10/10/agentic-ai-intent-based-permissions/

[28] - https://www.mckinsey.com/featured-insights/mckinsey-explainers/what-are-ai-guardrails

[29] - https://aembit.io/blog/agentic-ai-guardrails-for-safe-scaling/

[30] - https://aws.amazon.com/blogs/machine-learning/evaluating-ai-agents-real-world-lessons-from-building-agentic-systems-at-amazon/

[31] - https://www.ewsolutions.com/agentic-ai-governance/